# Cross-validation

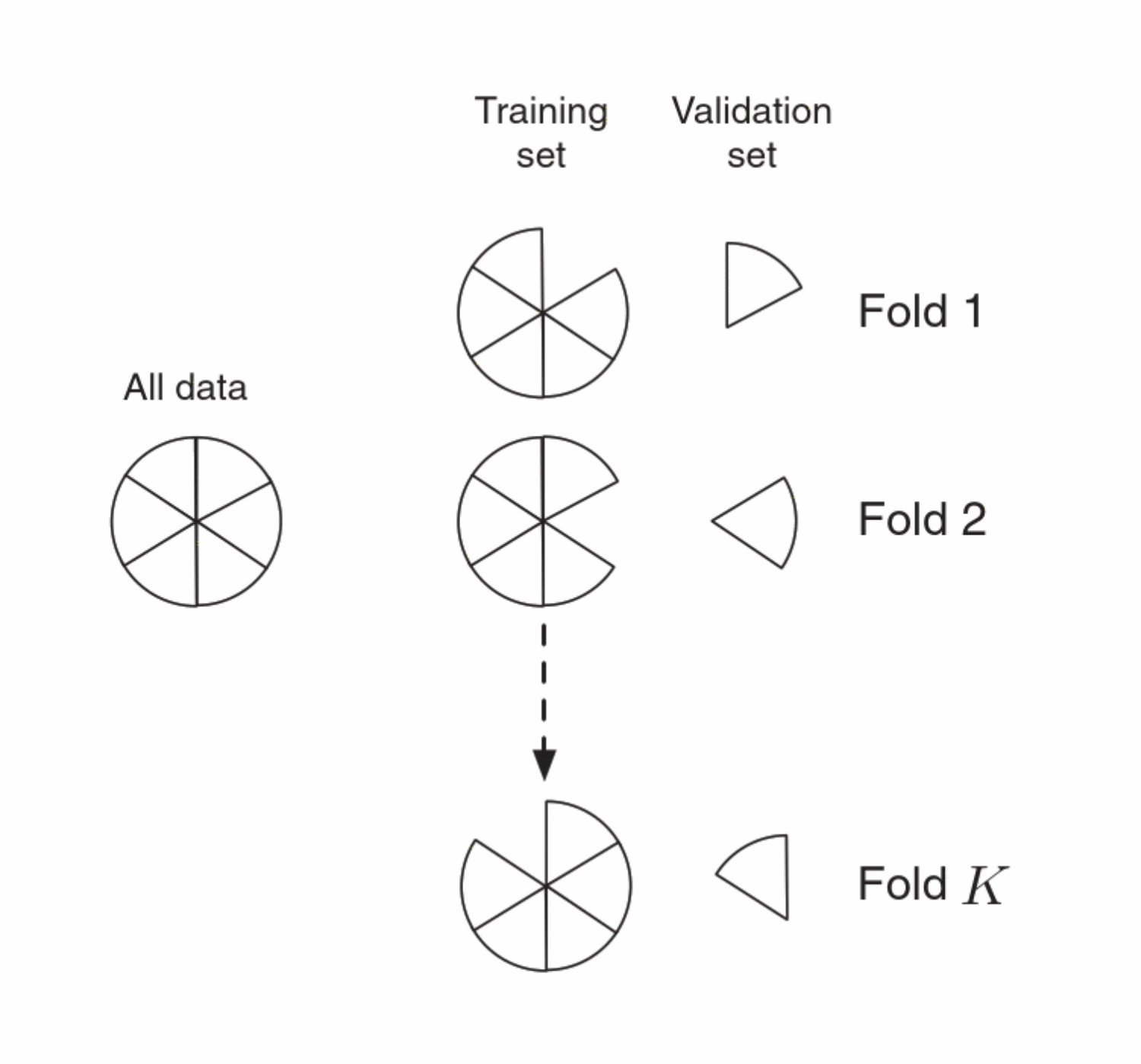

The loss that we calculate from validation data will be sensitive to the choice of data in our validation set. This is particularly problematic if our dataset (and hence our validation set) is small. Cross-validation (CV) is a technique that allows us to make more efficient use of the data we have. There are two common types of CV: Leave-One-Out Cross-Validation (LOOCV) and K-fold Cross-Validation.

What it does: estimates the error of a number of candidate models, each trained on subsets of the data.

Note: LOOCV is the special case of K-fold cross-validation in which each chunk contains a single observation, so just one observation is held out for validation in each round.
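As a rough illustration of LOOCV, the sketch below holds out one observation at a time and averages the squared validation errors. The polynomial model, the synthetic data, and the helper name `loocv_mse` are assumptions made for this example, not something taken from the reference.

```python
import numpy as np


def loocv_mse(x, y, degree):
    """Mean squared validation error with one observation held out per round.

    Hypothetical helper: fits a polynomial of the given degree (an assumed
    model class, chosen only for illustration).
    """
    n = len(x)
    errors = []
    for i in range(n):
        # Train on every observation except the i-th one.
        mask = np.arange(n) != i
        coeffs = np.polyfit(x[mask], y[mask], deg=degree)
        # Validate on the single held-out observation.
        pred = np.polyval(coeffs, x[i])
        errors.append((y[i] - pred) ** 2)
    return float(np.mean(errors))


# Synthetic data (assumed): a noisy quadratic.
rng = np.random.default_rng(0)
x = np.linspace(-1, 1, 20)
y = x ** 2 + rng.normal(scale=0.05, size=x.shape)

# Estimate the error of several candidate models (polynomial degrees).
scores = {d: loocv_mse(x, y, d) for d in (1, 2, 5)}
for d, s in scores.items():
    print(f"degree {d}: LOOCV loss = {s:.5f}")
```

Comparing the printed losses across degrees is one way to choose between candidate models; the cost is one model fit per observation, which can be expensive on large datasets.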

# Procedure

- Randomly partition the data into k chunks of (approx.) equal size;

- “Hold out” one chunk as the test set;

- Train on everything but that chunk;

- Test with the held-out chunk (record performance);

- Repeat so that each chunk is held out exactly once;

- Report the average performance over the k chunks.
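The steps above can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions: the function names (`k_fold_indices`, `k_fold_cv`, `fit_line`, `predict_line`), the squared-error loss, and the straight-line model are all hypothetical choices made for illustration.

```python
import numpy as np


def k_fold_indices(n_samples, k, seed=0):
    """Randomly partition the indices 0..n_samples-1 into k chunks of (approx.) equal size."""
    rng = np.random.default_rng(seed)
    shuffled = rng.permutation(n_samples)
    return np.array_split(shuffled, k)


def k_fold_cv(x, y, k, fit, predict, seed=0):
    """Average held-out mean squared error over k chunks (assumed loss)."""
    folds = k_fold_indices(len(x), k, seed)
    losses = []
    for i, held_out in enumerate(folds):
        # Train on everything but the held-out chunk.
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        model = fit(x[train], y[train])
        # Test with the held-out chunk and record performance.
        preds = predict(model, x[held_out])
        losses.append(np.mean((y[held_out] - preds) ** 2))
    # Report the average performance.
    return float(np.mean(losses))


# Hypothetical model for the example: a straight line fitted by least squares.
def fit_line(x, y):
    return np.polyfit(x, y, deg=1)


def predict_line(coeffs, x):
    return np.polyval(coeffs, x)


# Synthetic data (assumed): a noisy line.
rng = np.random.default_rng(42)
x = np.linspace(0, 1, 30)
y = 2.0 * x + 1.0 + rng.normal(scale=0.1, size=x.shape)

cv_loss = k_fold_cv(x, y, k=5, fit=fit_line, predict=predict_line)
print(f"5-fold CV loss: {cv_loss:.4f}")
```

Setting `k` equal to the number of observations makes every chunk a single observation, which reduces this sketch to LOOCV.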

# References

Rogers, Simon, and Mark Girolami. A First Course in Machine Learning, CRC Press LLC, 2016. ProQuest Ebook Central, http://ebookcentral.proquest.com/lib/uaz/detail.action?docID=4718644.